Claude skills for SEO: 11 we open-sourced on GitHub

11 Claude skills for SEO we use at InhouseSEO: page audits, content briefs, keyword plans, E-E-A-T scoring, linkbuilding. Free on GitHub, Apache 2.0.

GSC is the most valuable free SEO tool. But it has hard limits that cost you rankings every day. Here's what you're not seeing.

Google deletes your Search Console data after 16 months. If you connected GSC in January 2025, everything before September 2023 is already gone. No automatic export. No backup. No warning email. Just gone.

And that’s only the most obvious limit. Google Search Console is the only SEO tool that shows first-party data: real clicks, real impressions, real positions from Google itself. The traffic and ranking figures in Ahrefs and Semrush are estimates. But GSC has hard limits that Google rarely advertises, and those limits are silently costing you rankings every single day.

Here’s what’s actually missing from your GSC data, and what happens when you fix it.

Google silently deletes your Search Console history on a rolling 16-month window. This isn’t a bug or a storage limitation you can work around. It’s by design.

What this means in practice:

Most site owners don’t realize this until they need the data and it’s already been wiped. By then, there’s nothing to recover.
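The rolling window is plain date arithmetic. A minimal sketch, assuming the 16-month retention stated above and month-level granularity (the helper name `months_before` is made up for illustration):

```python
from datetime import date

RETENTION_MONTHS = 16  # retention window as stated in this article


def months_before(day: date, months: int) -> date:
    """Return the first day of the month that lies `months` months before `day`."""
    index = day.year * 12 + (day.month - 1) - months
    return date(index // 12, index % 12 + 1, 1)


# Connecting in January 2025 means nothing older than this month is still available.
cutoff = months_before(date(2025, 1, 15), RETENTION_MONTHS)
print(cutoff.isoformat())  # 2023-09-01
```

Run it with today's date to see which month is about to fall off the edge.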

InhouseSEO stores every data point permanently from the day you connect. Every click, every impression, every position change. Stored forever, fully searchable. But the real difference isn’t storage. It’s that Claude analyzes your historical data automatically. Instead of staring at year-over-year spreadsheets, you ask “how did my top pages perform compared to last year?” and get: “Your top 5 pages grew 34% YoY, but blog content declined 12%. These 3 posts are decaying fastest. Here’s the fix for each.”
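To make the idea of permanent storage concrete, here is a rough sketch of a local archive with a year-over-year comparison. It is not InhouseSEO's implementation: the table name `gsc_daily`, the column layout, and the sample rows are all assumptions made up for illustration.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS gsc_daily (
    day TEXT NOT NULL,
    page TEXT NOT NULL,
    query TEXT NOT NULL,
    clicks INTEGER NOT NULL,
    impressions INTEGER NOT NULL,
    position REAL NOT NULL,
    PRIMARY KEY (day, page, query)
)
"""


def archive_rows(conn, rows):
    """Insert or refresh daily rows; re-archiving a day overwrites, never duplicates."""
    conn.execute(SCHEMA)
    conn.executemany("INSERT OR REPLACE INTO gsc_daily VALUES (?, ?, ?, ?, ?, ?)", rows)
    conn.commit()


def total_clicks(conn, start, end):
    """Sum clicks for days in [start, end]; ISO dates compare correctly as text."""
    query = "SELECT COALESCE(SUM(clicks), 0) FROM gsc_daily WHERE day BETWEEN ? AND ?"
    return conn.execute(query, (start, end)).fetchone()[0]


def yoy_change_pct(current, previous):
    """Percentage change from `previous` to `current`; `previous` must be non-zero."""
    return (current - previous) * 100 / previous


# Made-up sample rows: (day, page, query, clicks, impressions, position)
conn = sqlite3.connect(":memory:")
sample = [
    ("2023-03-01", "/pricing", "seo tool", 100, 1000, 4.2),
    ("2024-03-01", "/pricing", "seo tool", 134, 1200, 3.8),
]
archive_rows(conn, sample)
archive_rows(conn, sample)  # running the same day twice leaves two rows, not four

last_year = total_clicks(conn, "2023-03-01", "2023-03-31")
this_year = total_clicks(conn, "2024-03-01", "2024-03-31")
print(f"{yoy_change_pct(this_year, last_year):.0f}% YoY")
```

The composite primary key is the design choice that matters here: a daily job can safely re-pull recent days without inflating totals.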

GSC exports cap at 50,000 rows per report. Sounds like a lot until you realize that every unique keyword-page-country-device combination counts as one row.

A site with 200 pages easily generates 100,000+ row combinations. A site with 1,000 pages? You might be seeing less than 10% of your actual keyword data.
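The arithmetic behind those numbers is easy to check. The sketch below uses the 50,000-row cap quoted above; the per-page multipliers (30 queries, 6 countries, 3 device types) are hypothetical, and since not every combination actually gets impressions, treat the result as an upper bound.

```python
EXPORT_CAP = 50_000  # row cap quoted in this article


def row_combinations(pages, queries_per_page=30, countries=6, devices=3):
    """Upper bound on keyword-page-country-device rows (multipliers are hypothetical)."""
    return pages * queries_per_page * countries * devices


def visible_share(total_rows, cap=EXPORT_CAP):
    """Fraction of rows that fit under the cap (1.0 when everything fits)."""
    if total_rows <= 0:
        return 1.0
    return min(cap, total_rows) / total_rows


for pages in (200, 1_000):
    rows = row_combinations(pages)
    print(f"{pages} pages: {rows:,} rows, {visible_share(rows):.1%} visible")
```

With these assumed multipliers, 200 pages yield 108,000 combinations and 1,000 pages yield 540,000, which puts the visible share for the larger site under 10%.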

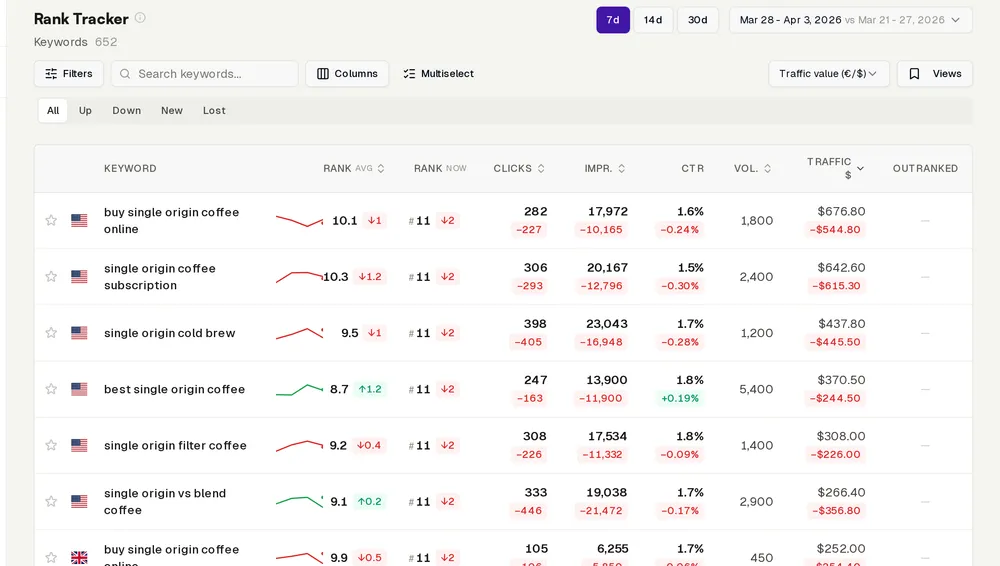

The rows GSC cuts are always the long-tail keywords, the ones with lower impressions and clicks. These are exactly the keywords where quick wins hide. The keyword you rank #11 for with zero optimization. The page that needs one paragraph added to jump from position 15 to position 8. The content gap your competitors haven’t covered yet.

If you can’t see it, you can’t fix it.
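Finding those near-miss keywords is a simple filter once the rows are in hand. A minimal sketch, assuming a "quick win" means ranking on page two (positions 11 to 20) with at least 50 impressions; both thresholds and the sample rows are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Row:
    query: str
    page: str
    impressions: int
    position: float


def quick_wins(rows, best=11.0, worst=20.0, min_impressions=50):
    """Rows ranking just off page one, sorted by impressions, highest first."""
    hits = [
        r for r in rows
        if best <= r.position <= worst and r.impressions >= min_impressions
    ]
    return sorted(hits, key=lambda r: r.impressions, reverse=True)


# Made-up sample data
rows = [
    Row("gsc data retention", "/blog/gsc-limits", 420, 11.3),
    Row("seo audit checklist", "/blog/audit", 90, 15.0),
    Row("inhouse seo", "/", 5000, 1.2),        # already ranking well
    Row("obscure query", "/blog/misc", 4, 12.0),  # too few impressions to matter
]

for win in quick_wins(rows):
    print(win.query, win.position)
```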

InhouseSEO pulls your complete dataset through the API. Every long-tail keyword, every page, every combination. Then Claude does what would take you hours: scans the full dataset for quick wins, cannibalization issues, and ranking opportunities hiding in the long tail. One question (“where are my quick wins?”) replaces what an agency would call a “low-hanging fruit audit” and charge €500 for.
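For readers curious what a paged pull might look like: the Search Analytics API returns rows in pages, controlled by the `rowLimit` and `startRow` request fields, with a documented maximum of 25,000 rows per request. The sketch below is not InhouseSEO's code. `run_query` is a hypothetical callable that sends one request body and returns the parsed response; with google-api-python-client it would typically wrap `service.searchanalytics().query(siteUrl=..., body=body).execute()`.

```python
from typing import Callable, Iterator

PAGE_SIZE = 25_000  # documented per-request maximum for rowLimit


def fetch_all_rows(
    run_query: Callable[[dict], dict],
    start_date: str,
    end_date: str,
    page_size: int = PAGE_SIZE,
) -> Iterator[dict]:
    """Yield every row for the date range, requesting one page at a time."""
    start_row = 0
    while True:
        body = {
            "startDate": start_date,
            "endDate": end_date,
            "dimensions": ["query", "page", "country", "device"],
            "rowLimit": page_size,
            "startRow": start_row,
        }
        # A response with no data may omit the "rows" key entirely.
        rows = run_query(body).get("rows", [])
        yield from rows
        if len(rows) < page_size:
            break  # a short page means there is nothing left to fetch
        start_row += page_size


# Stand-in for the real API so the loop can be tried offline (made-up data).
def fake_query(body: dict) -> dict:
    data = [{"keys": [f"query {i}"], "clicks": i} for i in range(7)]
    return {"rows": data[body["startRow"]: body["startRow"] + body["rowLimit"]]}


all_rows = list(fetch_all_rows(fake_query, "2024-01-01", "2024-01-31", page_size=3))
print(len(all_rows))  # 7
```

Pulling one day at a time rather than a whole month keeps each result set smaller, which tends to surface more of the long tail.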

This is the limit nobody talks about, because it’s so fundamental people just accept it.

GSC is a reporting tool. It tells you what happened. It never, ever tells you what to do about it.

Your page dropped from position 3 to position 7. GSC shows you the graph. Now what? You have to:

That workflow eats 15-20 hours per month. Before you fix a single thing. This is exactly what SEO agencies sell: not the data (you already have it), but the expertise to interpret it and the discipline to act on it consistently.

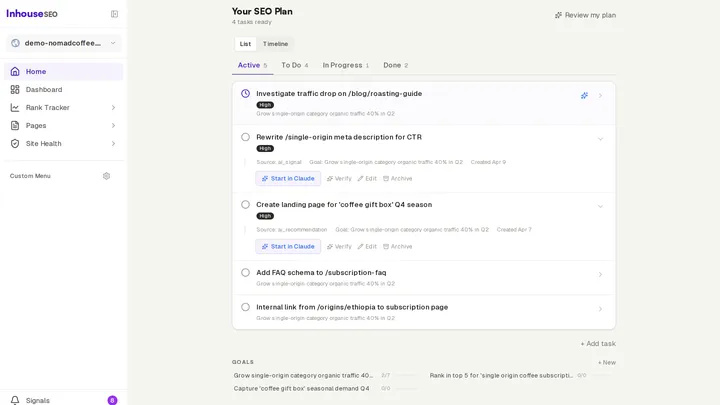

The question is whether you need a human agency for that, or whether Claude with your real data can do the same job. After watching thousands of sites go through this, the answer is clear: for strategy, analysis, and planning, Claude with your real data matches or beats most mid-tier agencies.

Here’s a concrete example. A B2B SaaS company connected GSC through InhouseSEO. Within the first conversation, Claude found:

That’s one conversation. Not a month-long audit. Not a €3,000 consulting engagement. One question to Claude: “What should I work on first?”

Here’s specifically what changes when you connect GSC through InhouseSEO instead of using it directly:

Permanent data storage. Every data point saved from day one. Compare any time period to any other time period, forever. No more 16-month cliff.

Complete keyword data. Full API pull, no 50,000 row cap. Every long-tail keyword visible and analyzed.

Competitor context. Weekly tracking of competitor sitemaps and SERP positions. When you drop, Claude tells you exactly who took your spot and what they did differently. Not just the “what.” The “why” and the “how to respond.”

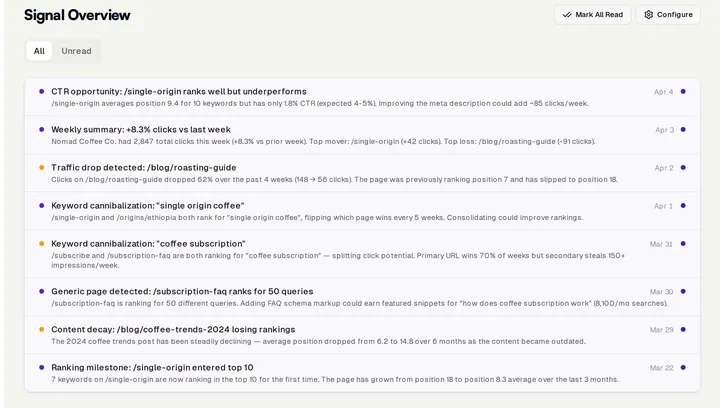

Proactive alerts. Daily monitoring with same-day alerts when something significant moves. Not every position change. Claude filters noise and only flags what matters, with recommended actions attached.
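Noise filtering of this kind can be approximated with two thresholds. This is a hedged sketch, not how InhouseSEO or Claude decides what matters: the minimum shift of 3 positions, the 100-impression floor, and the sample data are all assumptions.

```python
def significant_moves(before, after, min_shift=3.0, min_impressions=100):
    """Compare two snapshots mapping query -> (position, impressions).

    Returns (query, old_position, new_position) tuples, largest move first.
    """
    alerts = []
    for query, (new_pos, impressions) in after.items():
        if query not in before or impressions < min_impressions:
            continue  # skip new queries and low-traffic noise
        old_pos = before[query][0]
        if abs(new_pos - old_pos) >= min_shift:
            alerts.append((query, old_pos, new_pos))
    return sorted(alerts, key=lambda a: abs(a[2] - a[1]), reverse=True)


# Made-up snapshots from two consecutive days
yesterday = {"seo audit": (3.0, 900), "gsc limits": (12.0, 40), "keyword plan": (5.0, 300)}
today = {"seo audit": (7.0, 850), "gsc limits": (4.0, 35), "keyword plan": (5.6, 310)}

for query, old, new in significant_moves(yesterday, today):
    print(f"{query}: position {old} -> {new}")
```

Only "seo audit" is flagged here: "gsc limits" moved a lot but has too few impressions, and "keyword plan" barely moved.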

Content planning from real data. Quarterly content strategies built from your actual ranking data and competitor gaps, not guessed keyword volumes from third-party tools. SERP-analyzed briefs for every recommended piece.

Linkbuilding strategy. Competitor backlink analysis with specific outreach targets and angles, based on who links to content like yours but hasn’t linked to you yet.

Technical audits. Continuous monitoring of crawl errors, indexing issues, and Core Web Vitals, with plain-language explanations of what’s actually hurting your rankings and how to fix it.

All of this runs on your real Google data. The same data you already trust, just with the expertise layer that GSC was never built to provide.

For €49/mo, you get what agencies charge €2,000+ to deliver. The full Ahrefs comparison breaks down exactly where estimated data falls short versus real Google data.

Every day you use GSC alone, you’re losing historical data, missing long-tail opportunities, and spending hours on analysis that Claude can do in seconds.

Connect your Search Console through InhouseSEO. Ask Claude what you’re missing. You’ll find out in your first conversation.
