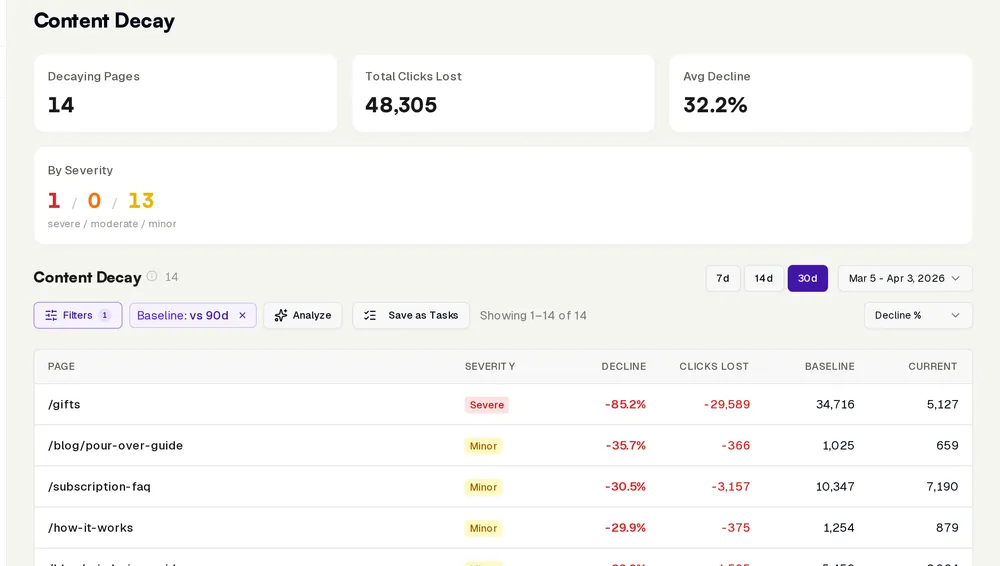

Your best blog post held position 2 for eight months. Last week it dropped to position 9. You didn’t notice until a colleague mentioned it.

That’s content decay. Not a sudden crash. You’d catch that. It’s the slow bleed. Two positions this month, one more next month. By the time it shows up in your traffic overview, you’ve been leaking clicks for months. At Keuze.nl I watched it happen dozens of times: pages that held a top-3 spot for months, quietly sliding down while nobody paid attention. Until traffic had been cut in half.

What content decay is (and why you miss it)

Content decay is the gradual loss of rankings and traffic for content that used to perform well. No Google penalty. No technical issue. Your content simply becomes less relevant while the world around it moves on.

Here’s what makes it dangerous: it never shows up in your daily metrics. A page getting 100 clicks a day that slowly loses about 5 per week looks fine on your dashboard. Until three months later you’re at 40 and wondering what went wrong.
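To see why a slow decline hides in day-to-day numbers, here is a quick sketch with made-up figures. The starting level, the weekly loss, and the function name are illustrative assumptions, not measurements from a real site.

```python
# Illustrative numbers only: a page starting at 100 clicks/day that
# loses a fixed number of daily clicks every week.
START_CLICKS = 100
WEEKLY_LOSS = 5   # assumed: clicks/day lost per week
WEEKS = 12        # roughly three months

def decay_series(start, weekly_loss, weeks):
    """Return the clicks/day level at the end of each week, starting at week 0."""
    return [max(start - weekly_loss * week, 0) for week in range(weeks + 1)]

series = decay_series(START_CLICKS, WEEKLY_LOSS, WEEKS)

# The change from one week to the next looks harmless...
first_week_change = (series[1] - series[0]) / series[0]
# ...but the cumulative change over the whole period does not.
total_change = (series[-1] - series[0]) / series[0]

print(f"Change after week 1: {first_week_change:.0%}")
print(f"Change after {WEEKS} weeks: {total_change:.0%}")
```

Any single week-over-week comparison shows a 5% dip, which is easy to shrug off. Only the comparison against the starting point shows the 60% loss.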

Most teams only discover content decay when someone happens to pull a traffic report and thinks: “Wait, didn’t that page do better?” By then the damage is done.

The 3 reasons your content ages out

Content decay almost always comes down to one of three causes:

1. Competitors publish something better. Someone wrote a more thorough, more recent article than yours. Google noticed and started ranking their version higher. You didn’t see it because you were busy creating new content instead of protecting what you already had.

2. Search intent shifts. The query stays the same, but what people expect changes. “Best CRM software” needed a different article in 2024 than it does in 2026. The SERP changes too: videos appear, featured snippets shift format, new players enter the market. Your content no longer matches what Google wants to show.

3. Freshness matters. For many queries, Google favors recent content. An article last updated 18 months ago competes poorly against one from last week. Not because it’s worse, but because Google treats freshness as a ranking signal for those queries. Especially in niches where information changes fast.

Why manual checking doesn’t work

“We check our rankings every month.” Great. You’ve got 200 published pages. Each targeting multiple keywords. That’s hundreds of data points you need to review monthly, compare against prior periods, and interpret.

In practice, everyone looks at the top 10-20 pages and hopes the rest is fine. It isn’t.

Content decay hits exactly the pages you’re not watching. That page sitting at position 5 for a mid-volume keyword. Not important enough for your monthly review, but still good for 300 clicks a month. Now it’s at position 14, pulling in 20. You won’t notice until someone stumbles across it by accident.

Manual monitoring doesn’t scale. Period.

How InhouseSEO detects and fixes decay

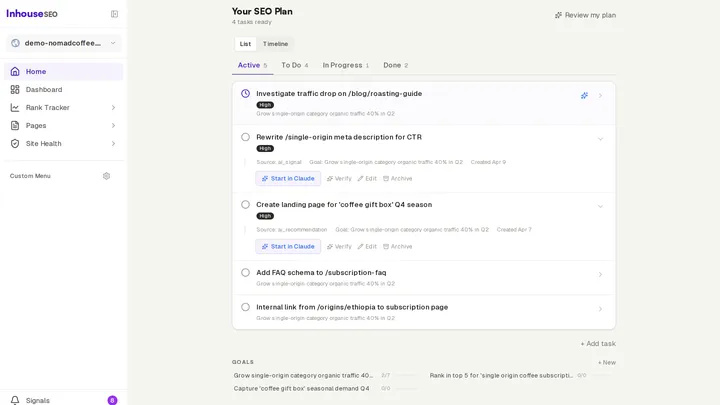

Most tools stop at detection. “This page is declining.” Thanks. That’s like your doctor saying “you’re sick” without a diagnosis or treatment plan. InhouseSEO covers the full trajectory, from detection to fix:

Detection based on your baselines. Not arbitrary thresholds (“more than 20% drop = alert”). InhouseSEO knows the normal pattern for every page. A page that typically gets 50 clicks/day dropping to 35 is a bigger problem than one going from 8 to 5. The system compares each page against its own history over 4-8 weeks. Short enough to catch problems early, long enough to filter out day-to-day noise.
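As a rough sketch of what baseline-relative detection can look like, the snippet below scores the latest week against a page's own recent history. This is an illustration with made-up numbers, not InhouseSEO's actual implementation: the function names, the z-score approach, and the -2 cutoff are all assumptions.

```python
from statistics import mean, stdev

def decay_score(history, current, min_spread=1.0):
    """Score how unusual `current` is relative to a page's own history.

    history: average daily clicks for each of the previous weeks (4-8 values)
    current: average daily clicks for the most recent week
    Returns a z-score; strongly negative values mean an unusual drop.
    """
    baseline = mean(history)
    # Floor the spread so a perfectly flat history cannot cause a division by zero.
    spread = max(stdev(history), min_spread)
    return (current - baseline) / spread

def is_decaying(history, current, cutoff=-2.0):
    """Flag a page when its latest week falls far below its own normal range."""
    return decay_score(history, current) <= cutoff

# Hypothetical pages: six weeks of history each, then the latest week.
big_page_history = [50, 52, 49, 51, 48, 50]   # steady around 50 clicks/day
small_page_history = [8, 5, 11, 6, 10, 8]     # noisy around 8 clicks/day

big_flagged = is_decaying(big_page_history, 35)
small_flagged = is_decaying(small_page_history, 5)
print(big_flagged, small_flagged)
```

With these numbers the 50-to-35 page gets flagged and the 8-to-5 page does not, even though the smaller page lost a larger percentage. Its history is simply noisier, so a week at 5 is within its normal range. A flat "20% drop" rule would have alerted on both.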

Root cause analysis. Not “your page is declining” but why. Claude analyzes three things: has a competitor recently published something better? Has search intent shifted (different SERP features, different content types)? Or is it purely a freshness problem? Each cause requires a different fix.

Content comparison. Claude compares your page against what’s actually ranking now. Not vaguely. Specifically. “The top 3 results all cover [specific topic] that your article doesn’t mention. Two of the three have a section about [other topic] with concrete numbers.” You know exactly what you’re missing.

Complete content brief. No abstract advice. A brief with exact sections: which to add, which to rewrite, which to cut, which to merge. With the search intent spelled out so you know what you’re writing toward. Including entity mapping and questions the top results answer.

Internal link suggestions. Claude scans your site and identifies pages that could link to the weakened page. Concrete suggestions: which page, what anchor text, where in the content. Internal links are one of the fastest ways to boost a declining page.

Link building plan. If the competitor’s edge is authority rather than content (more and better backlinks), you get a plan. Which sites link to competing pages but not yours? What are concrete outreach angles to earn those links?
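The "which sites link to them but not to you" question is a set difference. Here is a minimal sketch, assuming you already have lists of referring domains from whatever backlink source you use. The function name and all domains are hypothetical.

```python
def link_gap(own_domains, competitor_domains_by_page):
    """Return domains that link to competing pages but not to yours.

    Each result is (domain, number of competing pages it links to),
    sorted so domains linking to the most competitors come first.
    """
    own = set(own_domains)
    counts = {}
    for domains in competitor_domains_by_page.values():
        # Deduplicate per page, then drop domains that already link to you.
        for domain in set(domains) - own:
            counts[domain] = counts.get(domain, 0) + 1
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))

# Hypothetical data for illustration.
own_links = ["blog.example", "news.example"]
competitor_links = {
    "competitor-a.example/guide": ["news.example", "reviews.example", "forum.example"],
    "competitor-b.example/post": ["reviews.example", "directory.example"],
}

gap = link_gap(own_links, competitor_links)
print(gap)
```

Domains that link to several competing pages but not to yours are usually the most promising outreach targets, which is why the sketch sorts by that count.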

Sometimes the fix is simple: update the numbers and republish. Sometimes you need to consolidate: merge three weak pages into one strong one. Sometimes a page has served its purpose and you redirect the URL. The point: you don’t have to figure it out yourself.

Preventing content decay: the system

Fixing reactively is good. Preventing proactively is better.

InhouseSEO monitors your entire site continuously. Not once a month when you remember to. Every day. You get weekly alerts when something changes that needs action:

- A page declining two weeks in a row

- A competitor publishing new content for your most important keywords

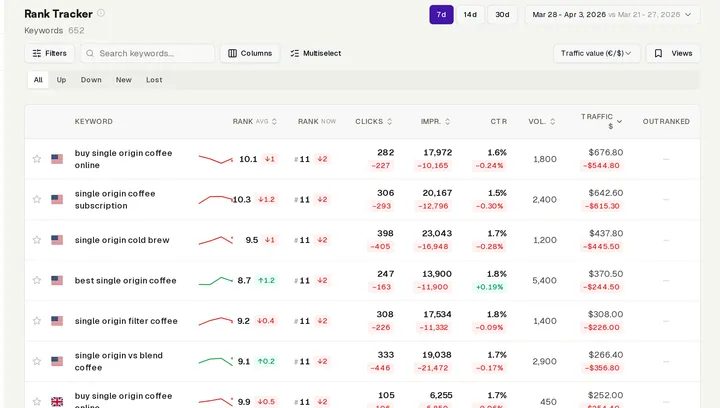

- Keywords showing position changes that indicate decay

- Pages where CTR drops while positions stay stable (title/description needs a refresh)
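Two of the alert conditions in the list above are simple enough to sketch in code. The example below assumes weekly per-page stats with clicks, impressions, and average position. The class, the function names, and the thresholds (0.5 positions, 20% relative CTR drop) are illustrative assumptions, not InhouseSEO's actual rules.

```python
from dataclasses import dataclass

@dataclass
class WeeklyStats:
    clicks: int
    impressions: int
    avg_position: float

    @property
    def ctr(self) -> float:
        """Click-through rate; 0.0 when there were no impressions."""
        return self.clicks / self.impressions if self.impressions else 0.0

def declined_two_weeks_in_a_row(weeks):
    """True if clicks fell in each of the last two week-over-week comparisons."""
    if len(weeks) < 3:
        return False
    first, second, third = weeks[-3:]
    return first.clicks > second.clicks > third.clicks

def ctr_drop_with_stable_position(prev, curr, max_position_shift=0.5, min_ctr_drop=0.2):
    """True if position barely moved but CTR fell by at least `min_ctr_drop` (relative)."""
    if prev.ctr == 0:
        return False
    position_stable = abs(curr.avg_position - prev.avg_position) <= max_position_shift
    relative_drop = (prev.ctr - curr.ctr) / prev.ctr
    return position_stable and relative_drop >= min_ctr_drop

# Hypothetical page: oldest week first.
weeks = [
    WeeklyStats(clicks=300, impressions=6000, avg_position=4.8),
    WeeklyStats(clicks=270, impressions=6000, avg_position=4.9),
    WeeklyStats(clicks=200, impressions=6100, avg_position=5.0),
]

print(declined_two_weeks_in_a_row(weeks))
print(ctr_drop_with_stable_position(weeks[-2], weeks[-1]))
```

In this made-up example both checks fire: clicks fell two weeks running, and in the latest week CTR dropped by roughly a quarter while the average position moved by only 0.1. That second pattern points at the title and description rather than the ranking.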

You don’t need to open dashboards. You don’t need to check anything. If action is needed, you’ll hear about it. If everything’s fine, you won’t be bothered.

What this saves you in practice

Let’s be honest about the alternatives.

Manual: 3-5 hours per week on data analysis, content audits, and tracking rankings. For a site with 200+ pages, that’s the minimum. And you’ll still miss things.

SEO agency: $2,000+/month. They’re thorough, but you’re paying for human hours. A quarterly review, monthly reporting, and reactive fixes when you ask for them.

InhouseSEO: $49/month. Continuous monitoring, automatic detection, instant diagnosis and complete fix plans. Not monthly. Daily. Not your top 20 pages. All of them.

The difference isn’t that we’re cheaper. The difference is that an AI that knows your site can monitor 24/7 without getting tired, without missing things, without forgetting. Compare it to how Ahrefs handles this and you’ll see where traditional tools fall short.

Content decay is inevitable. Failing to catch it in time isn’t.

Start your free trial ->